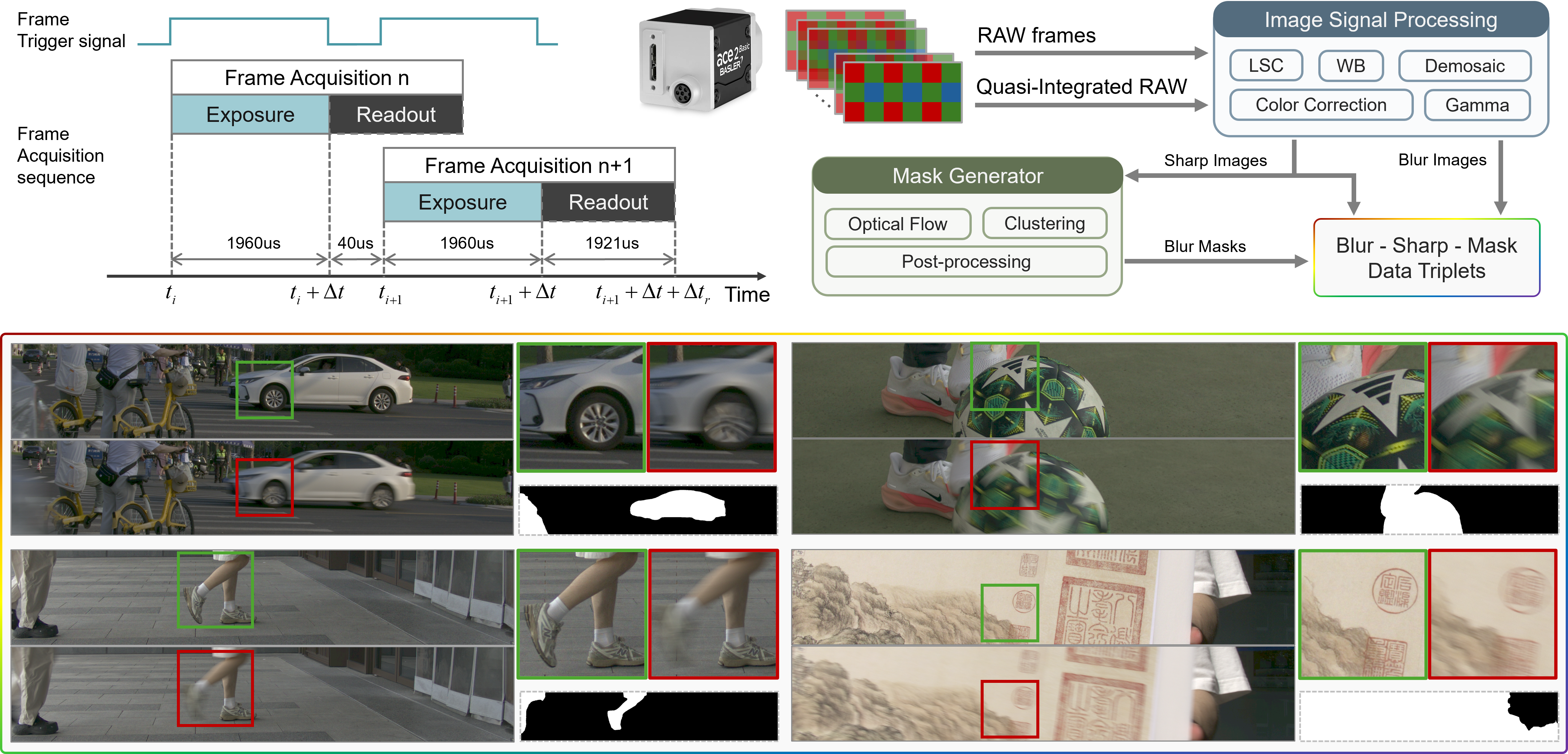

for Real-World Local Motion Deblurring

real-world local object motion blur captured by a Sony camera.

We demonstrate that the physical continuity of accumulated short exposures is the deciding factor for a deblurring network's ability to generalize to real-world scenarios. Frame interpolation cannot bridge the domain gap from large exposure gaps, as its synthesized data distribution fundamentally differs from the physical photon-integration process of a real camera.

Our OMDNet employs an asymmetric training strategy to leverage auxiliary data unavailable at test time. Multi-frame optical flow supervision significantly enhances complex motion modeling, while integrating ground-truth data into the training output (Adaptive Gated Fusion Mechanism) improves blur-region gating accuracy during inference.

Hover over the images to see the blurry inputs.

Scope of Model Capabilities

Global Motion Blur: Our released model is currently ineffective for severe camera motion but can adequately handle slight camera shake combined with standard object motion.

Boundaries of Local Motion Deblurring: As shown in the dynamic motion clip below, reconstruction artifacts tend to increase as the motion magnitude increases. (Blurry inputs are real-captured by our industrial camera with 20ms exposure.)

Generalization to Smartphone Images: Currently, our model cannot process these effectively. Due to the complex multi-frame strategies employed by modern smartphone ISPs, the resulting single blurry output no longer follow the data distribution of a single-exposure physical blur.

Further Utilization of the Dataset

The almost continuous frame sequences provided in this dataset open up possibilities for broader explorations beyond standard deblurring tasks. For instance:

- Enabling networks to generate a single sharp output image from a multi-frame sequence with varying exposure times, or further jointly optimizing the strategy of auto-exposure and ISO settings across multiple frames during capture.

- Enhancing network generalization to effectively process single blurry image output by any modern imaging device, regardless of whether they strictly conform to the physical laws of single-exposure.

- ...

Single-Frame Deblurring Capability vs. Blur Characteristics💡

We observe that deblurring performance is correlated with both absolute blur (in pixels) and relative blur (displacement relative to the object's own size). This phenomenon may be inherently tied to the network's effective receptive field/processing capacity and the degree of texture aliasing.

For instance, consider a large and a small windmill in the same image. If the large windmill's angular velocity is half that of the small one, and its radius is twice as large, the trajectory length swept by their outer blades within a single exposure is identical. However, the visual blur is noticeably more severe on the small windmill. When the exposure time increases to a critical node t, the small windmill's blur may become completely unrecoverable. Conversely, the large windmill can be downsampled by a factor of 2, making it equivalent to the small windmill at time t/2 for the network, thereby allowing successful restoration. This failure time node t is also highly dependent on the width of the windmill blades.

We hope this work encourages systematic discussions and definitions regarding the relationship between single-image deblurring network capabilities and specific blurry image characteristics, which would better guide future dataset construction, network design, and training strategies.

Rethinking the Dataset Paradigm: Must Ground Truth be the Physical "Truth"?

Whether utilizing a coaxial beam splitter system or our proposed approach, ensuring the sharpness of the Ground Truth necessitates a very short exposure time. In urban nightscapes, this creates an inherent conflict between signal-to-noise ratio and dynamic range. While High Dynamic Range (HDR) techniques are traditionally employed to balance exposure, capturing a GT that simultaneously features well-preserved highlights (like neon signs), clean shadow details, and absolute motion-free sharpness in dynamic scenes remains a formidable challenge.

This leads us to a paradigm shift: perhaps what we truly need for effective network training is a perceptually "pleasing" GT, rather than a strictly physical, single-exposure capture.

Ground Truth does not have to be the 'truth'.

@inproceedings{yu2026omoblur,

title = {OMoBlur: An Object Motion Blur Dataset and Benchmark for Real-World Local Motion Deblurring},

author = {Yu, Dingchuan and Li, Jiatong and Zhou, Jingwen and Zhuge, Zhengyue and Chen, Yueting and Li, Qi},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

year = {2026}

} Google Drive

Google Drive

Hugging Face

Hugging Face

Baidu Netdisk

Baidu Netdisk